Case: Multicast Entries Cannot Be Established on an S5560X-EI at a Customer Site


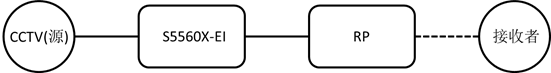

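Network Topology and Description

Topology: as shown in the figure below, the Layer 3 virtual interface (VLAN interface) on the left side of the S5560X-EI runs PIM-SM for Layer 3 multicast. The RP and the receivers are on the right side.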

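Problem Description

Problem: during initial deployment of the multicast service, the S5560X-EI was found to have no (S, G) entry for the multicast source side, so the service could not go live. Nearly 300 other devices in the same network position as this S5560X-EI are already deployed on site, and services on all of them are normal.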

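Process Analysis

Analysis:

The tables show that the RP information is normal, but the expected source-side multicast (S, G) entry is indeed missing: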

<ALTNPAL06SURVASW31>dis pim vpn-instance 13 rp-info

BSR RP information:

Scope: non-scoped

Group/MaskLen: 224.0.0.0/4

RP address Priority HoldTime Uptime Expires

10.110.5.3 140 180 6d:16h 00:02:43

10.110.5.4 160 180 6d:16h 00:02:43

<ALTNPAL06SURVASW31>dis pim vpn-instance 13 routing-table

Total 3 (*, G) entries; 1 (S, G) entries

(*, 239.128.66.23)

RP: 10.110.5.3

Protocol: pim-sm, Flag: WC

UpTime: 00:12:33

Upstream interface: Vlan-interface3017

Upstream neighbor: 10.110.6.157

RPF prime neighbor: 10.110.6.157

Downstream interface information:

Total number of downstream interfaces: 1

1: Vlan-interface3671

Protocol: igmp, UpTime: 00:12:33, Expires: -
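With many groups, checking this output by eye is error-prone. The following is a minimal sketch, assuming the output has been saved as plain text, of how the entry headers could be extracted and a group tested for a concrete-source (S, G) entry. The function names and the abridged sample are illustrative and are not part of any H3C tooling.

```python
import re

# Abridged sample modelled on the output above; a real capture is longer.
SAMPLE = """\
Total 3 (*, G) entries; 1 (S, G) entries
(*, 239.128.66.23)
RP: 10.110.5.3
Protocol: pim-sm, Flag: WC
Upstream interface: Vlan-interface3017
"""

# Matches a line that consists only of "(source, group)"; source may be "*".
ENTRY_RE = re.compile(
    r"^\s*\((\*|\d+\.\d+\.\d+\.\d+),\s*(\d+\.\d+\.\d+\.\d+)\)\s*$"
)


def parse_pim_entries(text):
    """Return a list of (source, group) tuples; source is '*' for (*, G)."""
    entries = []
    for line in text.splitlines():
        match = ENTRY_RE.match(line)
        if match:
            entries.append((match.group(1), match.group(2)))
    return entries


def has_sg_entry(text, group):
    """True if an entry with a concrete source address exists for `group`."""
    return any(
        src != "*" and grp == group for src, grp in parse_pim_entries(text)
    )


entries = parse_pim_entries(SAMPLE)
print(entries)
print(has_sg_entry(SAMPLE, "239.128.66.23"))
```

For the sample, only the (*, G) header is found, so the check reports that no (S, G) entry exists for 239.128.66.23.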

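Checking the configuration shows that the interface facing the multicast source is bound to the VPN instance, PIM-SM is correctly enabled on the VLAN interface, and Layer 3 multicast routing is enabled both globally and for the VPN instance: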

#

multicast routing

#

multicast routing vpn-instance 13

#

interface Vlan-interface3666

description ELV-L3A-Clinet1

ip binding vpn-instance 13

ip address 10.109.66.254 255.255.255.0

pim sm

igmp enable

#
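The same three prerequisites can be checked mechanically across many devices. The sketch below assumes the configuration is available as text with `#` separator lines, as in the excerpt above. The helper names are hypothetical and the sample is abridged.

```python
# Abridged copy of the configuration excerpt above.
CONFIG = """\
#
multicast routing
#
multicast routing vpn-instance 13
#
interface Vlan-interface3666
 ip binding vpn-instance 13
 ip address 10.109.66.254 255.255.255.0
 pim sm
 igmp enable
#
"""


def split_stanzas(config):
    """Split a '#'-separated configuration into lists of stripped lines."""
    stanzas, current = [], []
    for raw in config.splitlines():
        line = raw.strip()
        if line.startswith("#"):
            if current:
                stanzas.append(current)
            current = []
        elif line:
            current.append(line)
    if current:
        stanzas.append(current)
    return stanzas


def check_multicast_prereqs(config, interface, vpn):
    """Report whether each prerequisite line is present in the config."""
    stanzas = split_stanzas(config)
    first_lines = {stanza[0] for stanza in stanzas}
    iface = next(
        (s for s in stanzas if s[0] == f"interface {interface}"), []
    )
    return {
        "vpn_multicast_routing":
            f"multicast routing vpn-instance {vpn}" in first_lines,
        "interface_bound_to_vpn": f"ip binding vpn-instance {vpn}" in iface,
        "pim_sm_enabled": "pim sm" in iface,
    }


result = check_multicast_prereqs(CONFIG, "Vlan-interface3666", "13")
print(result)
```

All three checks pass for this excerpt, which matches the manual finding that the configuration is not the cause.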

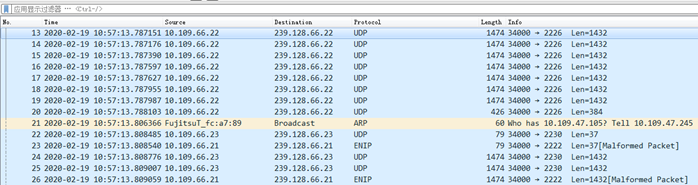

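We suspected that either the multicast packets were not reaching the device or the device was not sending them up to the CPU for processing. However, a packet capture and the per-type traffic counters on the interface confirm that multicast data is continuously being sent to the device: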

<ALTNPAL03AASW32>dis int GigabitEthernet 1/0/3

GigabitEthernet1/0/3

Current state: UP

Line protocol state: UP

IP packet frame type: Ethernet II, hardware address: 4ce9-e441-7840

Description: GigabitEthernet1/0/3 Interface

Bandwidth: 100000 kbps

Loopback is not set

Media type is twisted pair

Port hardware type is 1000_BASE_T

100Mbps-speed mode, full-duplex mode

Link speed type is autonegotiation, link duplex type is autonegotiation

Flow-control is not enabled

Maximum frame length: 10000

Allow jumbo frames to pass

Broadcast max-ratio: 100%

Multicast max-ratio: 100%

Unicast max-ratio: 100%

PVID: 3666

MDI type: Automdix

Port link-type: Access

Tagged VLANs: None

Untagged VLANs: 3666

Port priority: 0

Last link flapping: 19 hours 6 minutes 7 seconds

Last clearing of counters: Never

Peak input rate: 798232 bytes/sec, at 2013-01-08 18:11:39

Peak output rate: 8907637 bytes/sec, at 2013-02-20 21:19:22

Last 300 second input: 578 packets/sec 795200 bytes/sec 6%

Last 300 second output: 145 packets/sec 10225 bytes/sec 0%

Input (total): 2294378364 packets, 3231071335362 bytes

1656443292 unicasts, 46 broadcasts, 637935026 multicasts, 0 pauses

Input (normal): 2294378364 packets, - bytes

1656443292 unicasts, 46 broadcasts, 637935026 multicasts, 0 pauses

Input: 0 input errors, 0 runts, 0 giants, 0 throttles

0 CRC, 0 frame, - overruns, 0 aborts

- ignored, - parity errors

Output (total): 9942099630 packets, 11828137038671 bytes

864476789 unicasts, 4457196 broadcasts, 9073165645 multicasts, 0 pauses

Output (normal): 9942099630 packets, - bytes

864476789 unicasts, 4457196 broadcasts, 9073165645 multicasts, 0 pauses

Output: 0 output errors, - underruns, - buffer failures

0 aborts, 0 deferred, 0 collisions, 0 late collisions

0 lost carrier, - no carrier
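As a sanity check on these counters, the short sketch below recomputes the input utilization, the average packet size, and the multicast share of received packets from the figures shown above. It is a rough calculation on raw byte counts only and assumes nothing about how the device itself accounts for framing overhead. The function name is illustrative.

```python
import re

# Lines copied from the interface output above (abridged).
LINES = """\
Bandwidth: 100000 kbps
Last 300 second input: 578 packets/sec 795200 bytes/sec 6%
Input (total): 2294378364 packets, 3231071335362 bytes
1656443292 unicasts, 46 broadcasts, 637935026 multicasts, 0 pauses
"""


def summarize_input(text):
    """Derive utilization, average packet size, and multicast share."""
    bandwidth_kbps = int(
        re.search(r"Bandwidth:\s*(\d+)\s*kbps", text).group(1)
    )
    pps, bytes_per_sec = map(int, re.search(
        r"Last 300 second input:\s*(\d+) packets/sec\s+(\d+) bytes/sec",
        text,
    ).groups())
    total = int(re.search(r"Input \(total\):\s*(\d+) packets", text).group(1))
    mcast = int(re.search(r"(\d+) multicasts", text).group(1))
    return {
        # bits per second divided by link speed in bits per second
        "utilization_pct": bytes_per_sec * 8 / (bandwidth_kbps * 1000) * 100,
        "avg_packet_bytes": bytes_per_sec / pps,
        "multicast_share_pct": mcast / total * 100,
    }


summary = summarize_input(LINES)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

The recomputed utilization rounds to the 6% reported by the device, and the large average packet size together with the substantial multicast share is consistent with a steady inbound multicast stream.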

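We then ran debug pim all and saw no output at all, which confirms that the packets are not being sent to the CPU for processing.

We therefore suspected a fault in the underlying default multicast route in hardware. A remote troubleshooting session together with the chip vendor confirmed that this is indeed a software problem.

Root cause:

When the driver installs the default MC route inside the VPN, it does not assign a value to routeType, so the default type, ECMP, is used (ECMP index = 2, with a single path whose nexthop index = 2; this nh points to the MLL that replicates multicast data to the CPU, and at this point the default MC route works). After a unicast ECMP route is generated, the ECMP entry with index = 2 is taken over by the unicast route and rewritten to the unicast nh with next hop = 7696 (action: route). Because the nexthop of ECMP 2 has been changed to a unicast nh, the default MC route in the VPN can no longer trap packets to the CPU (traptocpu) and stops working. This is also why the problem does not always occur: it reproduces only after a unicast ECMP route has been triggered.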

GT_STATUS cpssDxChIpLpmVirtualRouterAdd

(

IN GT_U32 lpmDBId,

IN GT_U32 vrId,

IN CPSS_DXCH_IP_LPM_VR_CONFIG_STC *vrConfigPtr

)
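The aliasing described in the root cause can be illustrated with a toy model. The sketch below is not CPSS or driver code; the classes, field names, and the table layout are hypothetical and only the index and next-hop values (ECMP index 2, next hop 2, next hop 7696) come from the analysis above. It shows how a route that implicitly references a shared ECMP index changes behavior when another owner rewrites that index.

```python
from dataclasses import dataclass


@dataclass
class NextHop:
    action: str  # "trap_to_cpu" or "route" in this toy model
    index: int


class EcmpTable:
    """Toy model: each ECMP index holds a single next hop."""

    def __init__(self):
        self.entries = {}

    def write(self, ecmp_index, next_hop):
        # No ownership check: a later writer silently replaces the entry.
        self.entries[ecmp_index] = next_hop

    def resolve(self, ecmp_index):
        return self.entries[ecmp_index]


table = EcmpTable()

# The default MC route is installed without an explicit route type, so it
# is treated as ECMP and references ECMP index 2 -> next hop 2 (copy to CPU).
default_mc_route = {"vpn": 13, "ecmp_index": 2}
table.write(2, NextHop(action="trap_to_cpu", index=2))
before = table.resolve(default_mc_route["ecmp_index"]).action

# Later, a unicast ECMP route claims the same index and rewrites it.
table.write(2, NextHop(action="route", index=7696))
after = table.resolve(default_mc_route["ecmp_index"]).action

print(before, "->", after)  # trap_to_cpu -> route
```

In this model the multicast route itself is never modified, yet its effective action changes from trapping to the CPU to unicast routing, which mirrors why the fault only appears once a unicast ECMP route exists.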

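Solution

Solution:

1. Work around the problem by modifying the register table entry.

2. Release a new software version that fixes the problem permanently.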
