M9008-S Packet Loss Troubleshooting Case


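Network Topology and Description

The M9008-S is deployed as the egress device.

Problem Description

The site reported that pinging from an internal terminal, either to the internal interface of this M9K device or to an external DNS address, showed varying degrees of packet loss. The loss rate fluctuated between 1% and 5%, and users noticed lag when using their services.

Analysis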

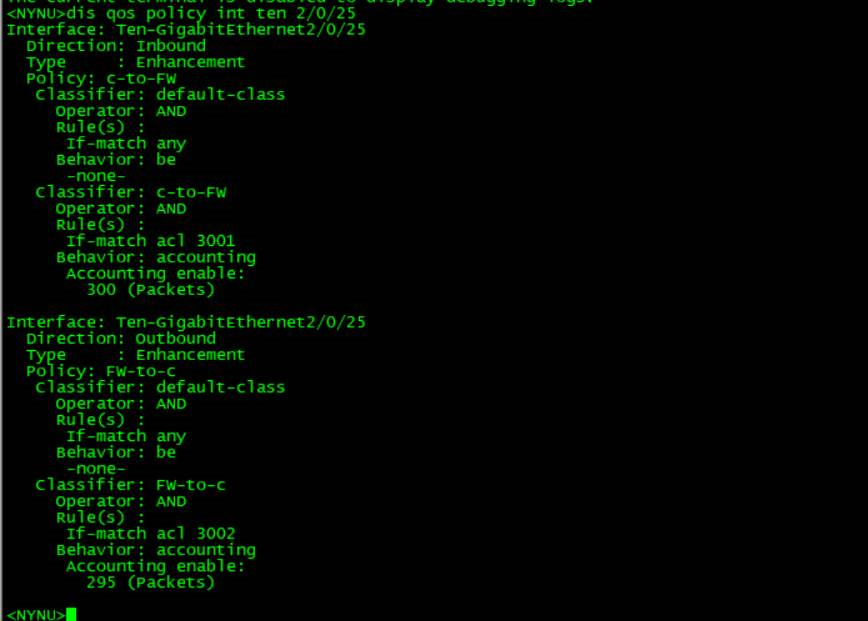

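The site was asked to ping the device's internal interface 2/0/25 with a fixed number of packets. The ping record and the traffic statistics show that the M9K received 300 packets in the inbound direction and sent 295 replies in the outbound direction, which confirms that the packets were dropped on the M9K itself.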
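As a quick check of the counters above, the loss rate can be worked out from the inbound and outbound packet counts. This is only a minimal sketch; the function name `loss_rate` and the variable names are illustrative and do not come from the device or the case.

```python
def loss_rate(received: int, replied: int) -> float:
    """Return the percentage of received requests that got no reply."""
    if received <= 0:
        raise ValueError("received must be positive")
    if not 0 <= replied <= received:
        raise ValueError("replied must be between 0 and received")
    return (received - replied) / received * 100.0


# Counters taken from the case: 300 requests in, 295 replies out.
inbound_requests = 300
outbound_replies = 295

dropped = inbound_requests - outbound_replies
rate = loss_rate(inbound_requests, outbound_replies)
print(f"dropped={dropped}, loss={rate:.2f}%")  # dropped=5, loss=1.67%
```

The result falls inside the 1% to 5% range that the site reported.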

The device's CPU and memory usage are both within the normal range:

===============display cpu===============

Slot 0 CPU 0 CPU usage:

5% in last 5 seconds

2% in last 1 minute

3% in last 5 minutes

Slot 2 CPU 0 CPU usage:

11% in last 5 seconds

11% in last 1 minute

11% in last 5 minutes

Slot 3 CPU 0 CPU usage:

7% in last 5 seconds

7% in last 1 minute

7% in last 5 minutes

Slot 3 CPU 1 CPU usage:

8% in last 5 seconds

7% in last 1 minute

8% in last 5 minutes

===============display memory===============

Memory statistics are measured in KB:

Slot 0:

Total Used Free Shared Buffers Cached FreeRatio

Mem: 4043532 1212768 2830764 0 2380 240944 71.5%

-/+ Buffers/Cache: 969444 3074088

Swap: 0 0 0

Slot 2:

Total Used Free Shared Buffers Cached FreeRatio

Mem: 999872 287976 711896 0 0 26220 71.3%

-/+ Buffers/Cache: 261756 738116

Swap: 0 0 0

Slot 3:

Total Used Free Shared Buffers Cached FreeRatio

Mem: 999872 287696 712176 0 0 26216 71.4%

-/+ Buffers/Cache: 261480 738392

Swap: 0 0 0

Slot 3 CPU 1:

Total Used Free Shared Buffers Cached FreeRatio

Mem: 16412828 6526272 9886556 0 2780 673224 62.9%

-/+ Buffers/Cache: 5850268 10562560

Swap: 0 0 0

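The traffic enters through the internal interface, and a review of the configuration found nothing special configured on the internal interface or on the device itself.

Further troubleshooting showed that the M9K's internal interface 2/0/25 maps to slot 3, and the display process cpu slot 3 cpu 1 command revealed individual cores running at full load: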

501 0.0% 0.0% 0.1% [kdrvdp38]

502 2.3% 2.3% 0.1% [kdrvdp39]

503 0.1% 0.1% 0.1% [kdrvdp40]

504 2.3% 0.0% 0.1% [kdrvdp41]

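The card model is NSQM2FWDSCA0, a firewall service module with 48 cores in total. The output above shows that the threads for cores 39 and 41 have each reached 2.3% CPU usage. Because these percentages are relative to the whole CPU, a single core out of 48 accounts for only about 2.1% (100%/48), so both cores are effectively saturated. Single-core saturation is the suspected cause of the packet loss.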
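The per-core reasoning can be sketched as a small script that flags saturated threads in the output. It assumes the columns are job ID, 5-second, 1-minute, and 5-minute usage followed by the thread name. The 95% threshold and all function and variable names are hypothetical choices for illustration, not part of any H3C tool.

```python
import re

TOTAL_CORES = 48                      # core count stated in the case
PER_CORE_SHARE = 100.0 / TOTAL_CORES  # about 2.08% of total CPU per core

# Lines copied from the display process cpu output in the case.
SAMPLE = """\
501 0.0% 0.0% 0.1% [kdrvdp38]
502 2.3% 2.3% 0.1% [kdrvdp39]
503 0.1% 0.1% 0.1% [kdrvdp40]
504 2.3% 0.0% 0.1% [kdrvdp41]
"""

# Assumed column layout: JID, 5-second %, 1-minute %, 5-minute %, name.
LINE_RE = re.compile(
    r"^\s*(\d+)\s+([\d.]+)%\s+([\d.]+)%\s+([\d.]+)%\s+(\S+)\s*$"
)


def saturated_threads(text: str, threshold: float = 0.95) -> list:
    """Return thread names whose 5-second usage reaches
    threshold * PER_CORE_SHARE (threshold is an assumed cutoff)."""
    hits = []
    for line in text.splitlines():
        match = LINE_RE.match(line)
        if not match:
            continue  # skip blank or unrelated lines
        five_sec = float(match.group(2))
        if five_sec >= threshold * PER_CORE_SHARE:
            hits.append(match.group(5))
    return hits


busy = saturated_threads(SAMPLE)
print(f"per-core share: {PER_CORE_SHARE:.2f}%")
print(busy)  # ['[kdrvdp39]', '[kdrvdp41]']
```

Only the 5-second column is checked here, since a short burst is what the case suspects. The longer averages could be checked in the same way.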

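The site was asked to run the display session top-statistics command to check the session statistics and see whether any abnormally large sessions had appeared recently. The site reported no abnormal session traffic.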

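Solution

Current observations suggest that a single flow momentarily carried heavy traffic and saturated one core. Because the sessions on site are not abnormal, the site was advised to change from per-flow forwarding to per-packet forwarding and observe the result.

Per-packet forwarding is configured in system view: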

forwarding policy { per-flow | per-packet }

After the change to per-packet forwarding, single-core CPU usage on site dropped to about 0.5%.
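The difference between the two policies can be illustrated with a toy simulation. This is a sketch under stated assumptions and does not reproduce the device's actual distribution algorithm: per-flow is modelled as a CRC32 hash of a 5-tuple, per-packet as simple round-robin, and the addresses, ports, and packet counts are invented for the example.

```python
import zlib
from collections import Counter

NUM_CORES = 48  # core count stated in the case


def per_flow_core(flow: tuple, num_cores: int = NUM_CORES) -> int:
    """Pick a core from a hash of the flow 5-tuple
    (illustrative hash, not the device's real one)."""
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % num_cores


def distribute(packets: list, policy: str, num_cores: int = NUM_CORES) -> Counter:
    """Return a Counter mapping core index to packets handled."""
    load = Counter()
    for index, flow in enumerate(packets):
        if policy == "per-flow":
            core = per_flow_core(flow, num_cores)
        elif policy == "per-packet":
            core = index % num_cores  # simple round-robin
        else:
            raise ValueError(f"unknown policy: {policy}")
        load[core] += 1
    return load


# Hypothetical traffic: one heavy flow plus 47 light flows.
heavy = ("10.0.0.5", "8.8.8.8", 40000, 53, "udp")
light = [("10.0.0.%d" % n, "8.8.8.8", 40000 + n, 53, "udp")
         for n in range(6, 53)]
packets = [heavy] * 4800 + [flow for flow in light for _ in range(10)]

per_flow = distribute(packets, "per-flow")
per_packet = distribute(packets, "per-packet")

# Per-flow: every packet of the heavy flow lands on the same core.
print("busiest core, per-flow:  ", max(per_flow.values()))
# Per-packet: the load is spread almost evenly across all cores.
print("busiest core, per-packet:", max(per_packet.values()))
```

In this model the busiest core under per-flow handles at least the 4800 packets of the heavy flow, while under per-packet no core handles more than one packet above any other. As a general trade-off, per-packet distribution can reorder packets within a flow, so it is worth watching application behaviour after the change.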
